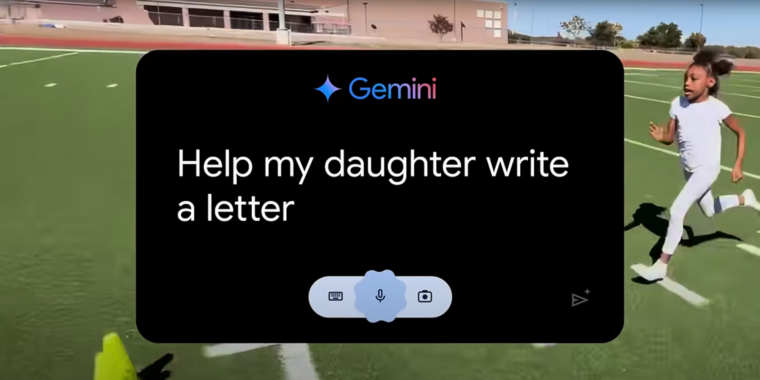

If you’ve watched any Olympics coverage this week, you’ve likely been confronted with an ad for Google’s Gemini AI called “Dear Sydney.” In it, a proud father seeks help writing a letter on behalf of his daughter, who is an aspiring runner and superfan of world-record-holding hurdler Sydney McLaughlin-Levrone.

“I’m pretty good with words, but this has to be just right,” the father intones before asking Gemini to “Help my daughter write a letter telling Sydney how inspiring she is…” Gemini dutifully responds with a draft letter in which the LLM tells the runner, on behalf of the daughter, that she wants to be “just like you.”

I think the most offensive thing about the ad is what it implies about the kinds of human tasks Google sees AI replacing. Rather than using LLMs to automate tedious busywork or difficult research questions, “Dear Sydney” presents a world where Gemini can help us offload a heartwarming shared moment of connection with our children.

Inserting Gemini into a child’s heartfelt request for parental help makes it seem like the parent in question is offloading their responsibilities to a computer in the coldest, most sterile way possible. More than that, it comes across as an attempt to avoid an opportunity to bond with a child over a shared interest in a creative way.

So in the spring I got a letter from a student telling me how much they appreciate me as a teacher. At the time I was going through some s***. Still am frankly. So it meant a lot to me. That was such a nice letter.

I read it again the next day and realized it was too perfect. Some of the phrasing just didn’t make sense for a high school student. Some of the punctuation.

I have no doubt the student was sincere in their appreciation for me, but once I realized what they had done, it cheapened those happy feelings. Blah.

You should’ve asked Gemini what to feel about it and how to respond…

That’s the problem with how they are doing it, everyone seems to want AI to do everything, everywhere.

It is now getting on my own nerves, because more and more customers somehow want AI integrated in their websites, even when they don’t have a use for it.

We created a society of antisocial people who are maximized as efficient working machines to the point of drugging the ones that are struggling with it.

Of course they want AI to do it for them and end human interactions. It’s simpler that way.

I’m curious, if they had gone to their parent, given them the same info, and come to the same message… would it have been less cheap feeling?

And do you know that isn’t the case? “Hey mom, I’m trying to write something nice to my teacher, this is what I have but it feels weird, can you make a suggestion?” is a perfectly reasonable thing to have happened.

I think there’s a different amount of effort involved in the two scenarios and that does matter. In your example, the kid has already drafted the letter and adding in a parent will make it take longer and involve more effort. I think the assumption is they didn’t go to AI with a draft letter but had it spit one out with a much easier to create prompt.

… But why did it cheapen it when they’re the one that sent it to you? Because someone helped them write it, somehow the meaning is meaningless?

That seems positively callous in the worst possible way.

It’s needless fear mongering: it supposedly doesn’t count for some arbitrary reason, since it’s not how we used to do things in the good old days.

No encyclopedia references… No using the internet… No using Wikipedia… No quoting since language and experience isn’t somehow shared and built on the shoulders of the previous generations with LLMs being the equivalent of a literal human reference dictionary that people want to say but can’t recall themselves or simply want to save time in a world where time is more precious than almost anything lol.

The only reason anyone shouldn’t like AI is due to the power draw. And nearly every AI company is investing more in renewables than anyone else while pretending like data centers are the bane of existence while they write on Lemmy watching YouTube and playing an online game lol.

David Joyner in his article On Artificial Intelligence and Authenticity gives an excellent example of how AI can cheapen the meaning of the gift: the thought and effort that goes into it.

The assistant parallel is an interesting one, and I think that comes out in how I use LLMs as well. I’d never ask an assistant to both choose and get a present for someone; but I could see myself asking them to buy a gift I’d chosen. Or maybe even do some research on a particular kind of gift (as an example, looking through my gift ideas list I have “lightweight step stool” for a family member. I’d love to outsource the research to come up with a few examples of what’s on the market, then choose from those.). The idea is mine, the ultimate decision would be mine, but some of the busy work to get there was outsourced.

Last year I also wrote thank you letters to everyone on my team for Associate Appreciation Day with the help of an LLM. I’m obsessive about my writing, and I know if I’d done that activity from scratch, it would have easily taken me 4 hours. I cut it down to about 1.5hrs by starting with a prompt like, “Write an appreciation note in first person to an associate who…” then provided a bulleted list of accomplishments of theirs. It provided a first draft and I modified greatly from there, bouncing things off the LLM for support.

One associate was underperforming, and I had the LLM help me be “less effusive” and to “praise her effort” more than her results so I wasn’t sending a message that conflicted with her recent review. I would have spent hours finding the right ways of doing that on my own, but it got me there in a couple exchanges. It also helped me find synonyms.

In the end, the note was so heavily edited by me that it was in my voice. And as I said, it still took me ~1.5 hours to do for just the three people who reported to me at the time. So, like in the gift-giving example, the idea was mine, the choice was mine, but I outsourced some of the drafting and editing busy work.

IMO, LLMs are best when used to simplify or support you doing a task, not to replace you doing it.

This is exactly how I view LLMs and have used them before.

These people in these scenarios aren’t going ‘Amazon buy my gf a gift she likes.’

They’re going, please write a letter to my professor thanking them for their help and all they’ve done for me in biology.

I don’t know of anyone who trusts AI enough to just carte blanche fire off emails immediately after getting prompts back either.

The fear and cheapening of AI is the same fear and cheapening as every other advancement in technology.

It’s not a real conversation unless you talk face to face like a man

say it in a group, write it on parchment and ink, pen and paper, typewriter, telegram, phone call, text message, fax, email. E: rip strikethroughs?

It’s not a real paper if it’s a meta analysis.

It’s not it’s not it’s not.

All for arbitrary reasons that people have used to offset mundane garden levels of tedium or just outright ableist in some circumstances.

People also seriously overestimate their ability to detect AI writing or even pictures. That dude may very well have gotten a sincere letter without AI but they’ve already set it in their mind that the student wrote it with AI as if they know this student so well from 10 written assignments they probably don’t care about to 1 potentially sincerely written statement to them.

If people like that think it cheapens the value, that’s on them. People go on and on about removing pointless platitudes and dumb culturally ingrained shit but then clutch their pearls the moment one person toes outside the in-group.

It just feels so silly to me.

IT’S NOT ART UNLESS IT’S OIL ON CANVAS levels of dumb.

It’s not altruistic/good-natured unless you don’t benefit from it in any way and feel no emotion by doing it! You can’t help the homeless unless you follow the rules! You can’t give them money if you record it.

In the end, they still got that money. But somehow it devalues it because instead of raising two people up higher, you only raised one? It’s foolishness.

People also seriously overestimate others’ abilities and cheapen what their time is worth all the damn time.